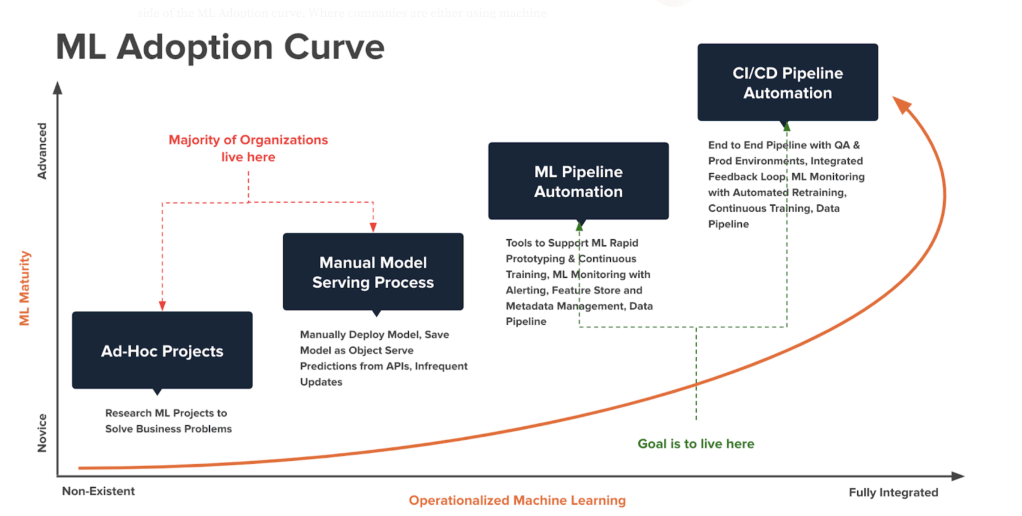

Machine learning (ML) has rapidly become a key component of modern businesses, with organizations using ML algorithms to derive insights, automate processes, and improve decision-making. However, deploying and managing machine learning models in production is often a challenging task that requires close collaboration between data scientists and IT operations teams. This is where MLOps comes in – a set of practices and technologies that aim to streamline the ML lifecycle and bridge the gap between machine learning and operations.

What is MLOps?

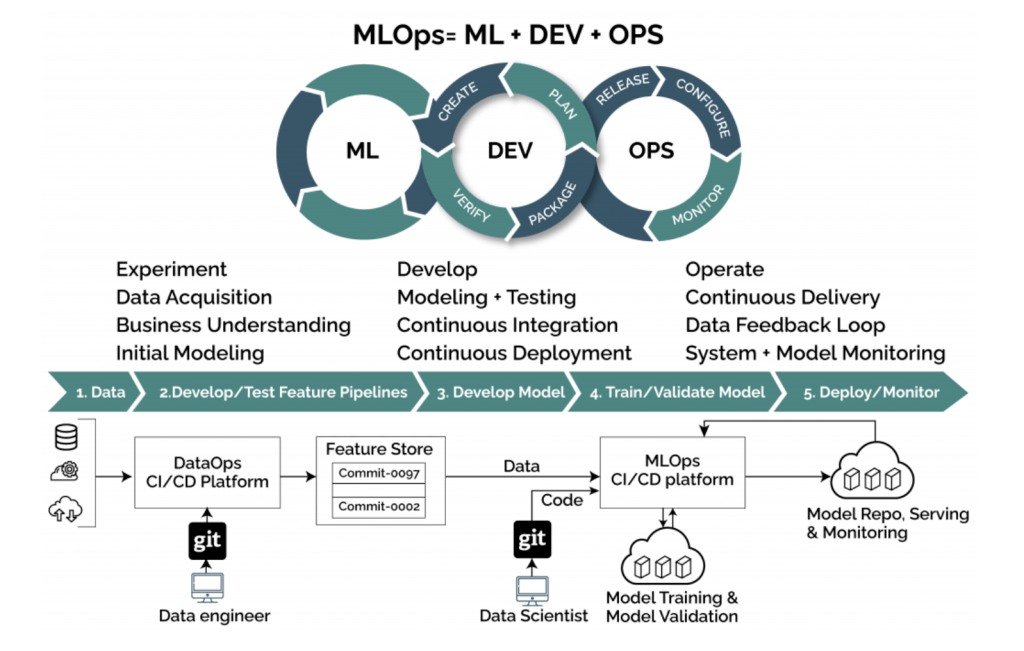

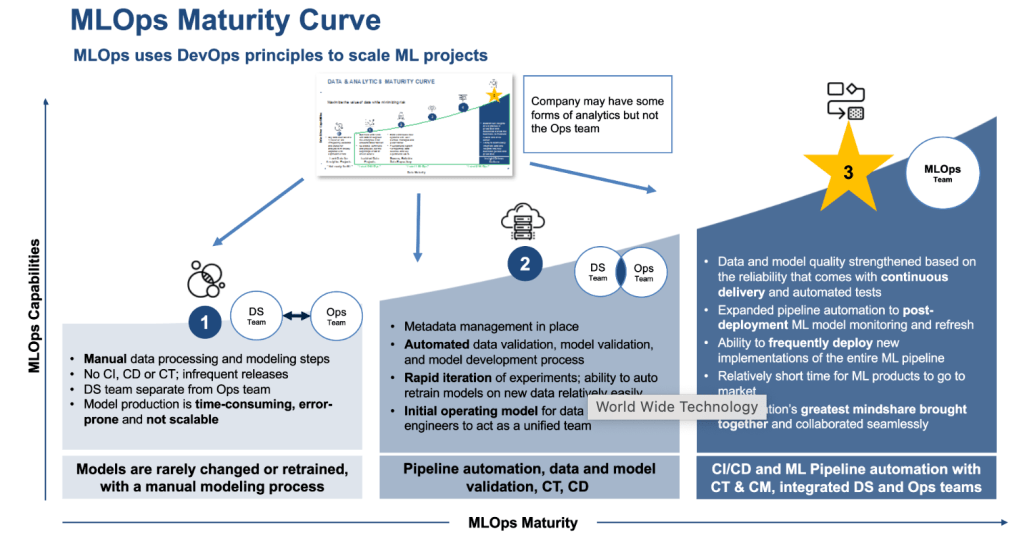

MLOps, short for Machine Learning Operations, is a relatively new term that describes the intersection of machine learning and operations. It encompasses the practices, processes, and technologies used to build, deploy, monitor, and manage machine learning models in production. MLOps borrows from the DevOps culture, which emphasizes collaboration and communication between development and operations teams, as well as the use of automation and continuous integration/continuous delivery (CI/CD) pipelines to streamline software development.

Why is MLOps important?

Deploying and managing machine learning models in production is often a complex and time-consuming task that involves multiple stakeholders and steps, such as data preprocessing, model training, validation, deployment, and monitoring. MLOps helps to address some of the key challenges of this process, such as:

- Versioning and reproducibility: ML models often depend on specific versions of software libraries, and the code used to build them should be versioned so that any build can be reproduced. MLOps helps to ensure that models are built with the right dependencies, which makes it easier to debug issues and roll back to previous versions if needed.

- Scalability and performance: Machine learning models can be computationally intensive and require large amounts of data and processing power. MLOps helps to ensure that models can scale and perform well in production by using techniques such as distributed training, load balancing, and resource allocation.

- Security and compliance: Machine learning models can be trained on sensitive data or be used in regulated industries, which means that they need to be secured and audited. MLOps helps to ensure that models are deployed securely and that they comply with relevant regulations such as GDPR or HIPAA.

- Monitoring and maintenance: Machine learning models are not static: they need to be monitored and maintained continuously to ensure that they perform well and remain up-to-date. MLOps helps to automate the monitoring and maintenance of models, which frees up data scientists and IT operations teams to focus on higher-value tasks.
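
To make the reproducibility point concrete, here is a minimal sketch of one way to fingerprint a training run. It assumes a run is fully described by its configuration, its training data, and its package versions. The function name `run_fingerprint` and its parameters are hypothetical and not part of any particular MLOps tool.

```python
import hashlib
import json
import platform


def run_fingerprint(config: dict, data: bytes, packages: dict) -> str:
    """Return a SHA-256 hex digest that identifies one training run.

    Assumption: config, data and package versions are the only inputs
    that influence the trained model.
    """
    payload = {
        "config": config,
        # Hash the data separately so the payload stays small.
        "data_sha256": hashlib.sha256(data).hexdigest(),
        "packages": packages,
        "python": platform.python_version(),
    }
    # sort_keys makes the JSON text, and therefore the hash,
    # independent of dictionary insertion order.
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


if __name__ == "__main__":
    # Illustrative values only; the package version is a placeholder.
    fingerprint = run_fingerprint(
        {"lr": 0.1, "epochs": 5}, b"training rows", {"numpy": "1.26.4"}
    )
    print(fingerprint)
```

Storing this digest next to the model artifact gives a quick check that two builds used the same inputs. If the digests differ, something in the configuration, data, or environment changed.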

MLOps Practices and Technologies

MLOps encompasses a wide range of practices and technologies, depending on the specific needs and context of an organization. However, some of the key practices and technologies used in MLOps include:

- Continuous Integration/Continuous Delivery (CI/CD): CI/CD is a software development practice that emphasizes automation and continuous feedback to improve the speed and quality of software development. In MLOps, CI/CD is used to automate the deployment of machine learning models, as well as to ensure that models are versioned and reproducible.

- Infrastructure as Code (IaC): IaC is a practice that involves managing infrastructure resources (such as servers or databases) using code. In MLOps, IaC is used to automate the provisioning and management of infrastructure resources that are used to train and deploy machine learning models.

- Model Versioning: Model versioning is the practice of tracking changes to machine learning models over time. In MLOps, model versioning is used to ensure that models can be reproduced and that issues can be easily tracked and resolved.

- Automated Testing: Automated testing is the practice of using software tools to automatically test machine learning models. In MLOps, automated testing is used to ensure that models meet certain quality standards and that they perform as expected in different scenarios.

- Model Monitoring: Model monitoring is the practice of continuously monitoring the performance of machine learning models in production. In MLOps, model monitoring is used to detect and diagnose issues with models, as well as to identify opportunities for improvement.

- Containerization: Containerization is the practice of packaging software applications (including machine learning models) in lightweight, portable containers that can be run consistently across different environments. In MLOps, containerization is used to simplify the deployment and management of machine learning models, as well as to improve scalability and performance.
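
Model versioning and automated testing often meet in a model registry with a quality gate. The sketch below is an in-memory illustration only. The class names, the `min_accuracy` threshold, and the artifact paths are all assumptions made for the example, and a real registry would persist this state.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class ModelVersion:
    version: int
    artifact_uri: str   # where the serialized model lives (hypothetical path)
    accuracy: float     # metric measured on a held-out set


@dataclass
class ModelRegistry:
    min_accuracy: float = 0.9   # quality gate; the threshold is an assumption
    versions: List[ModelVersion] = field(default_factory=list)
    promoted: List[ModelVersion] = field(default_factory=list)

    @property
    def production(self) -> Optional[ModelVersion]:
        """The version currently serving traffic, if any."""
        return self.promoted[-1] if self.promoted else None

    def register(self, artifact_uri: str, accuracy: float) -> ModelVersion:
        """Record a new immutable version with the next version number."""
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, accuracy)
        self.versions.append(mv)
        return mv

    def promote(self, version: int) -> bool:
        """Promote a version to production only if it passes the gate."""
        mv = self.versions[version - 1]
        if mv.accuracy < self.min_accuracy:
            return False
        self.promoted.append(mv)
        return True

    def rollback(self) -> Optional[ModelVersion]:
        """Drop the current production version and return the previous one."""
        if self.promoted:
            self.promoted.pop()
        return self.production


if __name__ == "__main__":
    registry = ModelRegistry(min_accuracy=0.9)
    registry.register("models/v1.pkl", accuracy=0.92)
    registry.register("models/v2.pkl", accuracy=0.85)
    print(registry.promote(1), registry.promote(2))
```

Because each `ModelVersion` is frozen, a registered version cannot be silently modified, and `rollback` simply returns to the previously promoted version.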

MLOps Workflow

The MLOps workflow typically consists of the following steps:

- Data preparation: In this step, data scientists prepare and preprocess the data that will be used to train the machine learning model.

- Model development: In this step, data scientists use machine learning algorithms to train the model on the prepared data. They also evaluate and optimize the model’s performance using techniques such as hyperparameter tuning and cross-validation.

- Model deployment: In this step, the trained machine learning model is deployed to a production environment using MLOps practices and technologies such as CI/CD, IaC, and containerization.

- Model monitoring and maintenance: In this step, the deployed model is continuously monitored and maintained using MLOps practices and technologies such as model monitoring, automated testing, and container orchestration.
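
The four steps above can be sketched end to end with a toy example. This is a deliberately small illustration using only the Python standard library: the one-variable linear model, the `max_mae` deployment gate, and the `max_shift` drift threshold are assumptions chosen for clarity, not recommended settings.

```python
from statistics import mean, pstdev


def prepare(raw):
    """Data preparation: drop rows with missing values."""
    return [(x, y) for x, y in raw if x is not None and y is not None]


def train(pairs):
    """Model development: fit y = a*x + b by ordinary least squares."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    x_bar, y_bar = mean(xs), mean(ys)
    var_x = sum((x - x_bar) ** 2 for x in xs)
    a = sum((x - x_bar) * (y - y_bar) for x, y in pairs) / var_x
    b = y_bar - a * x_bar
    # Keep training statistics so monitoring can compare against them later.
    return {"a": a, "b": b, "train_x_mean": x_bar, "train_x_std": pstdev(xs)}


def predict(model, x):
    return model["a"] * x + model["b"]


def deploy(model, holdout, max_mae=0.5):
    """Model deployment: release only if holdout error passes the gate."""
    mae = mean(abs(predict(model, x) - y) for x, y in holdout)
    return mae <= max_mae


def drifted(model, live_xs, max_shift=2.0):
    """Monitoring: flag drift when the live input mean moves more than
    max_shift training standard deviations away from the training mean."""
    shift = abs(mean(live_xs) - model["train_x_mean"]) / model["train_x_std"]
    return shift > max_shift


if __name__ == "__main__":
    raw = [(0, 1), (1, 3), (None, 5), (2, 5), (3, 7)]
    model = train(prepare(raw))
    print("deploy:", deploy(model, [(4, 9), (5, 11)]))
    print("drift:", drifted(model, [10, 11, 12]))
```

In practice each function would be a separate pipeline stage triggered by CI/CD, and a positive drift check would typically trigger retraining from the data preparation step.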

MLOps is a rapidly growing field that has become essential for businesses that want to derive value from their machine learning investments. By leveraging MLOps practices and technologies, organizations can streamline the machine learning lifecycle, reduce deployment times, improve performance, and enhance security and compliance. While MLOps is still a relatively new field, it has the potential to transform the way that organizations build and deploy machine learning models, and to drive innovation and growth across industries.